How Walmart Uses AI

A Case Study

If you want to understand how a giant actually listens to its people, go to a museum.

Specifically: the Walmart Heritage Museum. We helped turn a small corner of it into a living microphone: thousands of 30‑second videos from associates and guests, run through an AI pipeline and returned as executive‑ready insights. It’s oddly simple, a little weird, and very effective.

Below is the story, the stack, and the receipts.

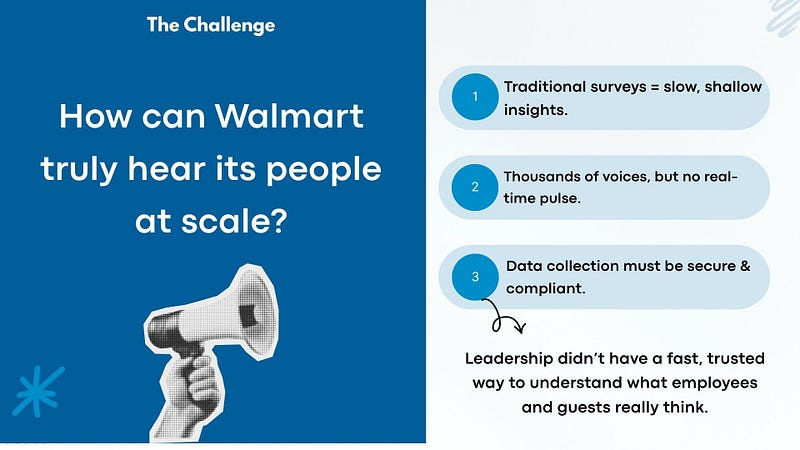

The Problem

“We have a ton of voices but no pulse.”

Leadership needed a way to hear how employees and guests actually felt — about self‑checkout, automation, store tech, robotic deliveries, etc. And they wanted it fast and without the usual survey fatigue.

Also: no PII, tight retention, and it had to be fun enough that real people would participate. That’s the brief we worked under with Bridgewater Studio, Walmart’s exhibit partner.

The Loop

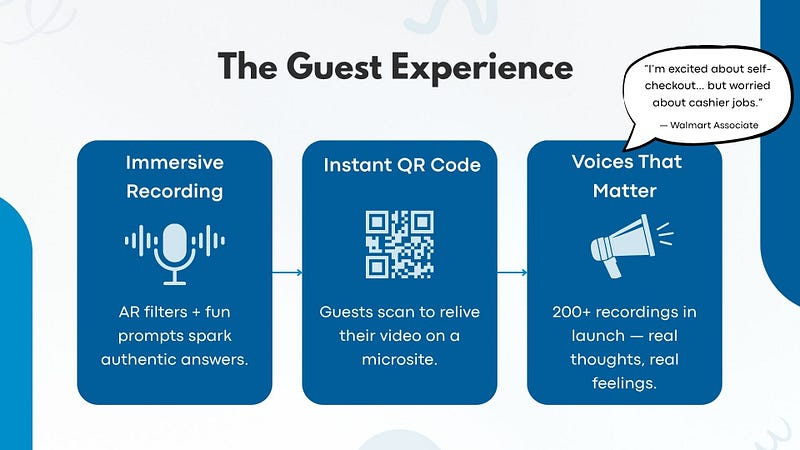

1) Capture voices.

A museum kiosk invites folks to speak for ~30 seconds. AR prompts keep it friendly; the local app handles the recording, legal screen, and upload. After the upload succeeds, it spits out a QR code so the guest can watch their clip on a tiny microsite later — again, no personal data required.

2) Turn speech into data.

In the background: transcribe → classify sentiment → extract topics/keywords. Each clip becomes “topic + tone + example snippet,” which makes later querying sane. (This lives in Walmart’s cloud.)
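To make the “topic + tone + example snippet” shape concrete, here is a minimal sketch of one clip going through the three stages. Everything named here is an assumption for illustration: `ClipRecord`, `analyze_clip`, and the stub stages are hypothetical stand-ins, not the production services, and the keyword rules are toys.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ClipRecord:
    """One analyzed clip: topic + tone + example snippet (hypothetical shape)."""
    clip_id: str
    topics: List[str]
    sentiment: str
    snippet: str


def analyze_clip(
    clip_id: str,
    audio: bytes,
    transcribe: Callable[[bytes], str],
    classify_sentiment: Callable[[str], str],
    extract_topics: Callable[[str], List[str]],
    snippet_chars: int = 120,
) -> ClipRecord:
    """Run transcribe -> sentiment -> topics and package the result."""
    transcript = transcribe(audio)
    return ClipRecord(
        clip_id=clip_id,
        topics=extract_topics(transcript),
        sentiment=classify_sentiment(transcript),
        snippet=transcript[:snippet_chars],
    )


# Stub stages so the sketch runs without any external service (assumptions).
def stub_transcribe(audio: bytes) -> str:
    return "I love self-checkout but I worry about cashier jobs."


def stub_sentiment(text: str) -> str:
    words = set(text.lower().replace(".", " ").replace(",", " ").split())
    positive = bool(words & {"love", "great"})
    negative = bool(words & {"worry", "hate"})
    if positive and negative:
        return "mixed"
    if positive:
        return "positive"
    return "negative" if negative else "neutral"


def stub_topics(text: str) -> List[str]:
    keyword_to_topic = {"self-checkout": "self-checkout", "cashier": "jobs"}
    lowered = text.lower()
    return [topic for kw, topic in keyword_to_topic.items() if kw in lowered]


record = analyze_clip("clip-001", b"", stub_transcribe, stub_sentiment, stub_topics)
```

Passing the stages in as functions keeps the record shape independent of whichever transcription or classification service sits behind them.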

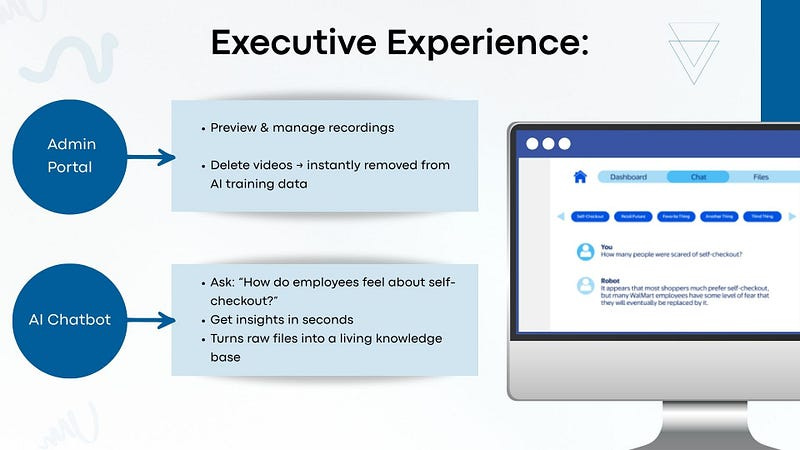

3) Explore insights.

Admins get a clean portal and a conversational dashboard. Ask, “How do employees feel about self‑checkout?” and get defensible roll‑ups with counts, themes, and representative quotes, all sourced back to real clips. It’s designed for analysis ranging from quick‑and‑dirty checks to deep dives.

At launch, recordings stacked up quickly: hundreds on day one, on a system built to scale into the hundreds of thousands. The sentiment wasn’t one‑note; people can be excited about self‑checkout and worried about cashier jobs. Both can be true. We also found that people sometimes just say nonsense, so we had to filter out that noise.
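A roll‑up of the kind described above (counts, sentiment split, quotes traced back to clips) can be sketched in a few lines. This is a toy under stated assumptions: the record fields are hypothetical, and the word‑count noise check is a placeholder, since this write‑up doesn’t spell out how the nonsense clips were actually filtered.

```python
from collections import Counter
from typing import Dict, List


def is_noise(snippet: str, min_words: int = 4) -> bool:
    """Placeholder noise check (assumption): very short clips count as noise."""
    return len(snippet.split()) < min_words


def roll_up(records: List[Dict], topic: str, max_quotes: int = 2) -> Dict:
    """Summarize one topic: total, sentiment counts, and quotes with clip ids."""
    matching = [
        r for r in records
        if topic in r["topics"] and not is_noise(r["snippet"])
    ]
    by_sentiment = Counter(r["sentiment"] for r in matching)
    quotes = [
        {"clip_id": r["clip_id"], "quote": r["snippet"]}
        for r in matching[:max_quotes]
    ]
    return {
        "topic": topic,
        "total": len(matching),
        "by_sentiment": dict(by_sentiment),
        "quotes": quotes,
    }


sample_records = [
    {"clip_id": "c1", "topics": ["self-checkout"], "sentiment": "positive",
     "snippet": "Self-checkout saves me time every single visit."},
    {"clip_id": "c2", "topics": ["self-checkout", "jobs"], "sentiment": "mixed",
     "snippet": "I like the speed but I worry about cashier jobs."},
    {"clip_id": "c3", "topics": ["self-checkout"], "sentiment": "neutral",
     "snippet": "Banana banana."},
]

summary = roll_up(sample_records, "self-checkout")
```

Keeping the `clip_id` next to each quote is what makes the answer defensible: anyone can open the clip behind the number.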

Under The Hood

Capture & handoff. Kiosk → local app → secure API (file hash returned to drive QR microsite).
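As a rough illustration of the hash‑to‑QR handoff, the sketch below hashes the uploaded bytes and builds the link a QR code could encode. SHA‑256 and the `MICROSITE_BASE` URL are assumptions; the hash algorithm and the real microsite address aren’t specified here.

```python
import hashlib

# Hypothetical base URL; the real microsite address is not part of this write-up.
MICROSITE_BASE = "https://microsite.example/clips"


def file_hash(data: bytes) -> str:
    """Content hash of the uploaded video (SHA-256 is an assumption)."""
    return hashlib.sha256(data).hexdigest()


def microsite_url(digest: str) -> str:
    """Link the kiosk would encode in the QR code. No personal data involved."""
    return f"{MICROSITE_BASE}/{digest}"


video_bytes = b"fake video payload"
qr_target = microsite_url(file_hash(video_bytes))
```

Because the link is derived from the file itself, the guest needs no account, email, or name to find their clip later.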

Storage & retention. Video lands in S3 with a 90‑day retention policy; transcripts and analysis live longer for insight (policy‑driven).
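For readers who haven’t set one up, a 90‑day expiry on S3 is a lifecycle rule. The sketch below builds that rule and shows how it could be applied with boto3. The bucket name, the `videos/` prefix, and the rule ID are hypothetical; only the 90‑day figure comes from the project.

```python
def retention_rule(prefix: str, days: int = 90) -> dict:
    """Build an S3 lifecycle configuration that expires objects under `prefix`."""
    return {
        "Rules": [
            {
                "ID": f"expire-{prefix.strip('/')}-after-{days}d",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Expiration": {"Days": days},
            }
        ]
    }


def apply_retention(bucket: str, prefix: str, days: int = 90) -> None:
    """Apply the rule to a bucket. Needs boto3 and AWS credentials at call time."""
    import boto3  # imported lazily so building the rule needs no AWS setup

    s3 = boto3.client("s3")
    s3.put_bucket_lifecycle_configuration(
        Bucket=bucket,
        LifecycleConfiguration=retention_rule(prefix, days),
    )


# Hypothetical prefix; transcripts would live under a different prefix or store.
video_rule = retention_rule("videos/")
```

Scoping the rule to a prefix is one way to let raw video expire while transcripts and analysis follow their own, longer policy.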

Analysis. Transcribe → sentiment + topic tagging → embed into a search index for fast retrieval (RAG, not slow fine‑tunes).
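The retrieval half of RAG can be shown with a deliberately tiny example: embed each transcript, embed the question, return the closest clips. The bag‑of‑words `embed` below is a toy stand‑in for a real embedding model, and the in‑memory dict stands in for a real search index; both are assumptions made to keep the sketch runnable.

```python
import math
from collections import Counter
from typing import Dict, List


def embed(text: str) -> Counter:
    """Toy embedding (assumption): word counts instead of a learned vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(index: Dict[str, Counter], question: str, k: int = 2) -> List[str]:
    """Return up to k clip ids most similar to the question, best first."""
    query = embed(question)
    scored = sorted(
        ((cosine(query, vec), clip_id) for clip_id, vec in index.items()),
        reverse=True,
    )
    return [clip_id for score, clip_id in scored[:k] if score > 0]


index = {
    "a": embed("self-checkout is fast and easy"),
    "b": embed("delivery robots are fun"),
}
hits = retrieve(index, "how is self-checkout")
```

The retrieved transcripts are then handed to the language model as context, so adding new clips only means adding rows to the index rather than retraining anything.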

Admin UI. Browse/preview, search, and — crucially — delete. Deletions trigger re‑index so the model’s answers stop using that item now, not next quarter.
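Here is a small sketch of why delete‑plus‑re‑index takes effect right away: the delete call removes the transcript and every index entry pointing at it in one step, so the next query can’t surface it. `ClipStore` and its simple keyword index are hypothetical, in‑memory stand‑ins for the real storage and search index.

```python
from typing import Dict, List, Set


class ClipStore:
    """In-memory stand-in for transcript storage plus a keyword search index."""

    def __init__(self) -> None:
        self._transcripts: Dict[str, str] = {}  # clip_id -> transcript
        self._index: Dict[str, Set[str]] = {}   # term -> clip ids containing it

    def add(self, clip_id: str, transcript: str) -> None:
        self.delete(clip_id)  # drop stale index entries if the clip is re-added
        self._transcripts[clip_id] = transcript
        for term in set(transcript.lower().split()):
            self._index.setdefault(term, set()).add(clip_id)

    def delete(self, clip_id: str) -> bool:
        """Remove the clip from storage AND from the index in the same call."""
        transcript = self._transcripts.pop(clip_id, None)
        if transcript is None:
            return False
        for term in set(transcript.lower().split()):
            ids = self._index.get(term)
            if ids is not None:
                ids.discard(clip_id)
                if not ids:
                    del self._index[term]
        return True

    def search(self, term: str) -> List[str]:
        return sorted(self._index.get(term.lower(), set()))


store = ClipStore()
store.add("c1", "Self-checkout is quick")
store.add("c2", "Self-checkout confuses me")
before = store.search("self-checkout")
store.delete("c1")
after = store.search("self-checkout")
```

With a fine‑tuned model, the same removal would mean retraining; with an index, it is a bookkeeping operation.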

Auth & audit. Amazon Cognito federated with Walmart’s IdP (SAML/OIDC) for SSO; activity is logged to CloudWatch + DynamoDB audit tables with alerts for anything sketchy.

The SSO/deletion/auditing hardening shipped as Phase 2, a fixed‑price workstream over ~8–10 weeks (IdP approvals are always challenging, especially with a client this large).

What stakeholders actually see

If you look at the internal wireframes and admin screenshots, it’s intentionally boring — in a good way. A login screen (SSO), a dashboard with category counts, a chat tab with topic chips, and a simple file list with “preview / delete.” Executives can ask a question, skim the trend, and move on — two minutes between meetings.

Why this worked (and what we learned)

Meet people where they are. A museum kiosk is low‑friction. Quick prompt, one take, done. QR code lets them watch their story later. Participation goes way up.

Privacy-first or don’t bother. No PII for visitors plus time‑boxed video storage means legal/security doesn’t flag the project as high-risk, there’s less red tape to navigate, and people are more candid in their videos.

Deletion is a product feature. If an admin removes a clip, it should vanish from storage and the assistant’s memory immediately. That’s why we used RAG + re‑index, not a heavy re‑train.

Start SSO early. The config is straightforward; the approvals are not. Kick off IdP federation in week one so the rest of the build doesn’t stall.

The receipts (impact so far)

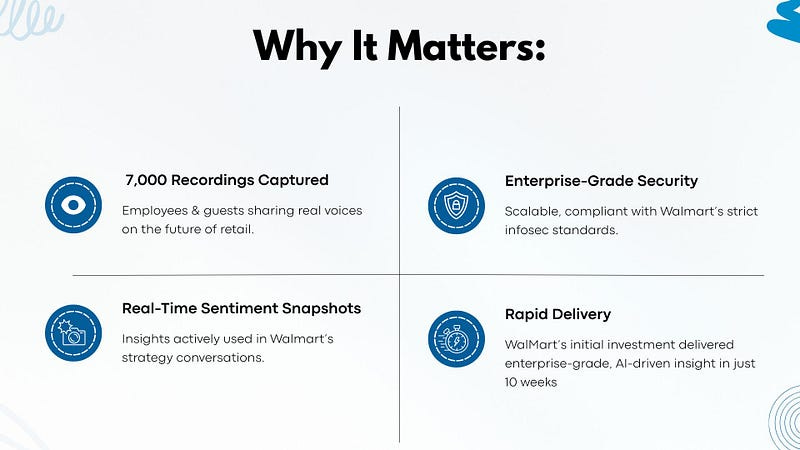

Thousands of authentic voices captured (we’ve now got 7,000+ recordings).

Real‑time sentiment snapshots that show up in strategy conversations — not a dusty survey PDF.

Enterprise‑grade hardening (SSO, deletion with re‑index, audit logging) ready for scale.

Fast delivery. The initial investment delivered enterprise‑grade, AI‑driven insight in ~10 weeks.

Final Musings

You can steal this pattern easily. Yes, it’s a museum exhibit. But it’s also a repeatable blueprint for any big org:

Make it delightful to speak.

Make it safe to store.

Make it trivial to ask good questions later.

Then close the loop — publicly. That’s how you build trust with a workforce the size of a small country.

About the Author:

Sam Hilsman is the founder and CEO of CloudFruit®. When he’s not wrestling APIs or debugging integrations, he writes blog posts and tries not to make the same mistake twice. (Emphasis on try.)